When teams talk about accuracy casually, they often mean very different things. Some mean a few spot checks. Some mean a software report. Some mean a tolerance they hope the project achieved. That ambiguity is exactly why formal standards matter.

For Aerotas, the most useful benchmark has long been the ASPRS Positional Accuracy Standards for Digital Geospatial Data. These standards provide a structured way to measure and report accuracy rather than relying on marketing claims or informal assumptions.

Why ASPRS matters

The ASPRS standards are widely respected because they focus on independent measurement. They are not about whether a model "looks right." They are about whether the mapped positions agree with reliable points measured separately from the model itself.

That rigor is part of what makes the standards valuable and also part of what makes them difficult for many people. They are more academic than most day-to-day site workflows, and many teams need a simpler explanation before the standards become practically useful.

Core ideas behind the standards

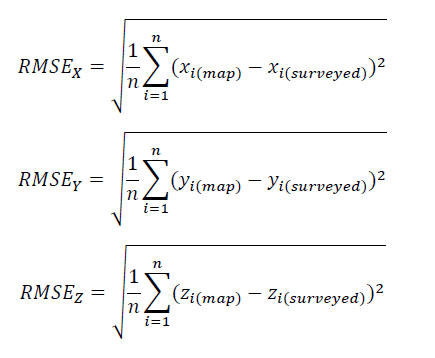

- Accuracy is measured statistically. Root mean square error (RMSE) summarizes the differences between positions taken from the model and the same positions measured independently.

- Checkpoints must be independent. If a point was used to create or anchor the model, it is not an independent checkpoint.

- Horizontal and vertical accuracy should be considered separately. They behave differently and should not be blended into a vague single claim.

- The check data must be more accurate than the mapping product it is testing. If the checkpoint survey is no better than the aerial data, the comparison cannot show which of the two is in error.
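The statistical idea in the list above can be made concrete with a short sketch. The Python below computes separate horizontal and vertical RMSE values from a set of independent checkpoints, combining the x and y components into one horizontal figure. The `Checkpoint` class and `accuracy_summary` function are hypothetical names invented for this illustration, not part of any ASPRS tool, and the sketch covers only the basic statistic; the standard's own reporting rules may include terms this example leaves out.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Checkpoint:
    """Hypothetical record for one independent checkpoint."""
    point_id: str
    surveyed: tuple[float, float, float]  # (x, y, z) from the independent ground survey
    modeled: tuple[float, float, float]   # (x, y, z) read from the finished map or model


def rmse(errors):
    """Root mean square of a sequence of signed errors."""
    errors = list(errors)
    if not errors:
        raise ValueError("need at least one checkpoint")
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def accuracy_summary(checkpoints):
    """Return horizontal and vertical RMSE as separate values."""
    checkpoints = list(checkpoints)
    rmse_x = rmse(c.modeled[0] - c.surveyed[0] for c in checkpoints)
    rmse_y = rmse(c.modeled[1] - c.surveyed[1] for c in checkpoints)
    rmse_z = rmse(c.modeled[2] - c.surveyed[2] for c in checkpoints)
    return {
        "n_checkpoints": len(checkpoints),
        "rmse_x": rmse_x,
        "rmse_y": rmse_y,
        # Horizontal RMSE combines the two planimetric components.
        "rmse_horizontal": math.hypot(rmse_x, rmse_y),
        # Vertical RMSE is kept separate, never blended into the horizontal value.
        "rmse_vertical": rmse_z,
    }
```

The function returns the horizontal and vertical results under separate keys on purpose, so a caller cannot accidentally quote a single blended number.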

Ground control points are not checkpoints

One of the most common mistakes in drone surveying is trying to treat the same point as both a ground control point and a checkpoint. That does not work. A ground control point helps build or align the model. A checkpoint tests the finished model from outside that process.

If all your points are ground control, then you may have georeferenced the project, but you have not independently proven its true accuracy.
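One way to keep the two roles apart is to decide which surveyed points are control before processing and treat everything else as held out. The sketch below assumes each surveyed point has a unique ID; the function names `hold_out_checkpoints` and `reused_points` are hypothetical and exist only for this example.

```python
def hold_out_checkpoints(surveyed_ids, control_ids):
    """Return the surveyed point IDs that were NOT used as ground control."""
    surveyed = set(surveyed_ids)
    control = set(control_ids)
    unknown = control - surveyed
    if unknown:
        raise ValueError(f"control points were never surveyed: {sorted(unknown)}")
    checkpoints = surveyed - control
    if not checkpoints:
        # Every point anchored the model, so nothing is left to test it with.
        raise ValueError("no independent checkpoints: all points were used as control")
    return sorted(checkpoints)


def reused_points(control_ids, checkpoint_ids):
    """Return IDs that appear in both lists; any hit means the check is not independent."""
    return sorted(set(control_ids) & set(checkpoint_ids))
```

Running `reused_points` against the control and checkpoint lists in a deliverable is a quick sanity check: an empty result is necessary for an independent test, although it does not by itself prove the checkpoints were well distributed or accurately surveyed.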

What should be reported

Good reporting should make it easy to answer a few basic questions:

- What standard was used?

- How many checkpoints were held out from the modeling process?

- How were those checkpoints distributed across the site?

- What was the measured horizontal error?

- What was the measured vertical error?

This matters because a single headline number can hide important context. A project can perform well in one part of the site and poorly at the edges. It can have strong relative fit but weak absolute placement. Clear reporting prevents that ambiguity.
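To illustrate how a headline number can hide a problem at the edges, the sketch below reports vertical RMSE for the whole site alongside a per-zone breakdown. The zone labels and the `vertical_rmse_by_zone` function are assumptions made for this example; ASPRS does not prescribe this particular grouping, and the sample values are invented.

```python
import math
from collections import defaultdict


def rmse(errors):
    """Root mean square of a sequence of signed errors."""
    errors = list(errors)
    if not errors:
        raise ValueError("need at least one error value")
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def vertical_rmse_by_zone(residuals):
    """residuals: iterable of (zone_label, vertical_error) pairs.

    Returns (overall_rmse, {zone_label: zone_rmse}).
    """
    residuals = list(residuals)
    by_zone = defaultdict(list)
    for zone, dz in residuals:
        by_zone[zone].append(dz)
    overall = rmse(dz for _, dz in residuals)
    per_zone = {zone: rmse(values) for zone, values in by_zone.items()}
    return overall, per_zone


# Invented checkpoint residuals in meters: tight in the interior, loose at the edges.
sample = [
    ("interior", 0.02), ("interior", -0.02), ("interior", 0.02), ("interior", -0.02),
    ("edge", 0.10), ("edge", -0.10),
]
overall, per_zone = vertical_rmse_by_zone(sample)
```

With these invented values the site-wide figure comes out at roughly 0.06 m, while the edge checkpoints alone are near 0.10 m, which is the kind of context a single number leaves out.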

If you are buying drone-survey work: ask for the standard used, the number of independent checkpoints, and separate horizontal and vertical results. If those details are missing, the accuracy claim is incomplete.

Why this is still practical

The ASPRS standards can feel academic, but the underlying lesson is simple: accuracy should be measured independently and reported clearly. Even teams that do not implement every nuance of the standard should still absorb that core discipline.

For surveyors and engineers, that discipline is not optional. If a project will be relied upon for design, staking, or certification decisions, the team needs a defensible explanation of how accuracy was checked.

Bottom line

The gold standard is not a software estimate or a promise that everything processed green. The gold standard is an accuracy statement tied to an accepted methodology, backed by independent checkpoints, and communicated clearly enough that another professional can understand what was tested and what was not.

Read the ASPRS Positional Accuracy Standards if you want the source document Aerotas has historically relied on.